In case you are wondering where the megs of Web performance information I have posted came from: I have decided to move my articles into the content-management system I call a blog, so that I can manage them more effectively.

It is also in keeping with my philosophy of promoting Web performance.

Enjoy the new stuff!

Month: April 2005

Performance Improvement From Caching and Compression

This paper is an extension of the work done for another article that highlighted the performance benefits of retrieving uncompressed and compressed objects directly from the origin server. I wanted to add a proxy server into the stream and determine if proxy servers helped improve the performance of object downloads, and by how much.

Using the same series of objects in the original compression article[1], the CURL tests were re-run 3 times:

- Directly from the origin server

- Through the proxy server, to load the files into cache

- Through the proxy server, to avoid retrieving files from the origin.[2]

This series of three tests was repeated twice: once for the uncompressed files, and then for the compressed objects.[3]
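The article does not list the exact commands that were run, so the following is only a sketch of how the three runs could be driven. The object URL and proxy address are hypothetical placeholders, and the particular curl options chosen (`--silent`, `--output`, `--write-out`, `--header`, `--proxy`) are an assumption about how the timings might be collected, not a record of the original test harness.

```python
from typing import List, Optional, Tuple

# Hypothetical endpoints, used only for illustration.
OBJECT_URL = "http://origin.example.com/index.html"
PROXY_URL = "http://proxy.example.com:3128"


def curl_command(url: str, proxy: Optional[str] = None,
                 compressed: bool = False) -> List[str]:
    """Build a curl argument list that discards the body and prints the total time."""
    cmd = ["curl", "--silent", "--output", "/dev/null",
           "--write-out", "%{time_total}\\n"]
    if compressed:
        # Ask the server for a GZIP-encoded response.
        cmd += ["--header", "Accept-Encoding: gzip"]
    if proxy:
        cmd += ["--proxy", proxy]
    cmd.append(url)
    return cmd


def build_runs(url: str, proxy: str,
               compressed: bool) -> List[Tuple[str, List[str]]]:
    """Return the three runs for one object, in the order they must execute."""
    return [
        ("source", curl_command(url, compressed=compressed)),
        # First pass through the proxy: the object is fetched and cached.
        ("proxy load", curl_command(url, proxy, compressed)),
        # Second pass: the same request, now answerable from the proxy cache.
        ("proxy draw", curl_command(url, proxy, compressed)),
    ]


if __name__ == "__main__":
    for compressed in (False, True):
        for label, cmd in build_runs(OBJECT_URL, PROXY_URL, compressed):
            print(label, " ".join(cmd))
```

Note that the "proxy load" and "proxy draw" commands are identical; the only difference between the two runs is whether the proxy already holds the object.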

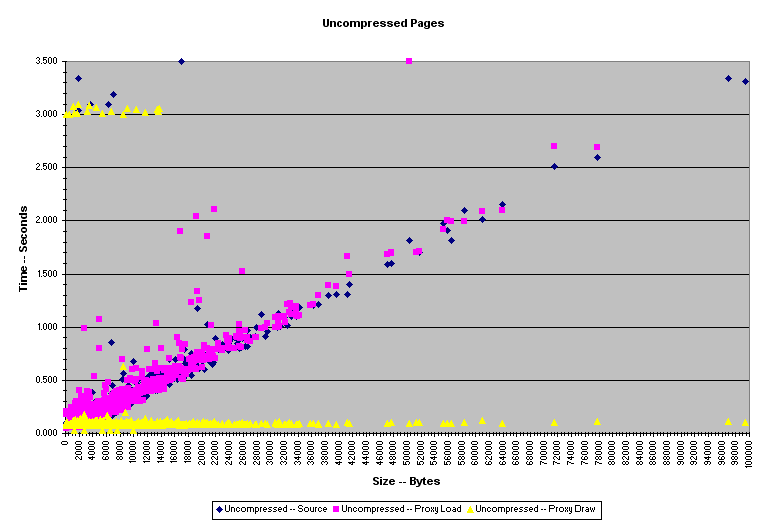

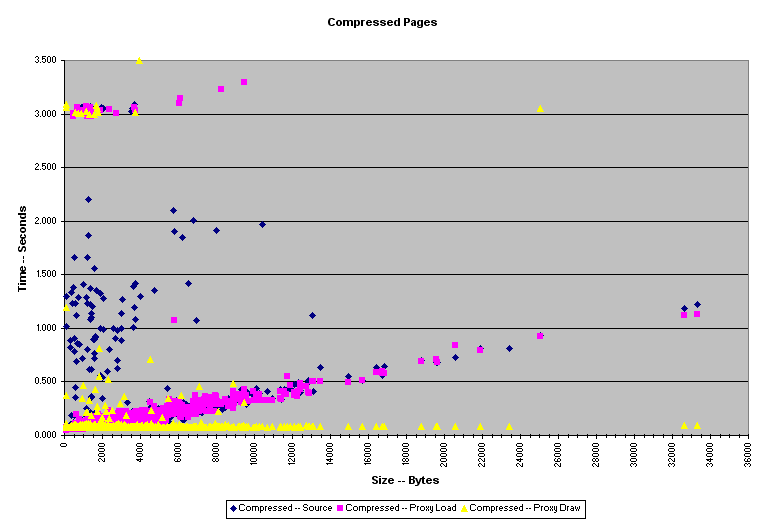

As can be seen clearly in the plots above, compression greatly improved Web page download times when the objects were retrieved from the source. However, the performance difference between compressed and uncompressed data all but disappears when retrieving objects from a proxy server on a corporate LAN.

Instead of the linear growth between object size and download time seen in both of the retrieval tests that used the origin server (Source and Proxy Load data), the Proxy Draw data clearly shows the benefits that accrue when a proxy server is added to a network to assist with serving HTTP traffic.

Uncompressed Pages

| Test | Mean Download Time |
| --- | --- |
| Total Time Uncompressed - No Proxy | 0.256 |
| Total Time Uncompressed - Proxy Load | 0.254 |
| Total Time Uncompressed - Proxy Draw | 0.110 |

Compressed Pages

| Test | Mean Download Time |
| --- | --- |
| Total Time Compressed - No Proxy | 0.181 |
| Total Time Compressed - Proxy Load | 0.140 |
| Total Time Compressed - Proxy Draw | 0.104 |

The data above show just how much a local proxy server, explicit caching directives, and compression can improve the performance of a Web site. For sites that force a great many requests to be returned directly to the origin server, compression will be of great help in reducing bandwidth costs and improving performance. However, when pages are allowed to be cached in local proxy servers, the difference between compressed and uncompressed pages all but vanishes.
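To put numbers on that narrowing gap, the mean times from the two tables can be compared stage by stage. This is simple arithmetic on the published means, not a re-analysis of the raw data.

```python
# Mean download times copied from the two tables above.
mean_times = {
    "No Proxy":   {"uncompressed": 0.256, "compressed": 0.181},
    "Proxy Load": {"uncompressed": 0.254, "compressed": 0.140},
    "Proxy Draw": {"uncompressed": 0.110, "compressed": 0.104},
}


def compression_gain(uncompressed: float, compressed: float) -> float:
    """Fraction of the uncompressed download time saved by compression."""
    return (uncompressed - compressed) / uncompressed


gains = {stage: compression_gain(**times) for stage, times in mean_times.items()}

for stage, gain in gains.items():
    print(f"{stage}: compression saves {gain:.1%}")
# No Proxy: compression saves 29.3%
# Proxy Load: compression saves 44.9%
# Proxy Draw: compression saves 5.5%
```

Once the objects are served from the proxy cache, compression accounts for only about a 5% difference in mean download time.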

Conclusion

Compression is a very good start when attempting to optimize performance. The addition of explicit caching headers in server responses, which allow proxy servers to serve cached data to clients on remote LANs, can improve performance to an even greater extent than compression can. The two should be used together to improve the overall performance of Web sites.
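The explicit caching header used in these tests was `Cache-Control: max-age=3600`. As a rough illustration of what that tells a proxy, here is a deliberately simplified freshness check. It is an assumption-laden sketch: it looks only at `max-age` and ignores everything else a real cache has to consider (`no-store`, `no-cache`, `s-maxage`, `Expires`, validators, and so on). The function names are made up for this example.

```python
import re
from typing import Optional


def max_age_seconds(cache_control: str) -> Optional[int]:
    """Extract the max-age value from a Cache-Control header, if present."""
    match = re.search(r"max-age\s*=\s*(\d+)", cache_control, re.IGNORECASE)
    return int(match.group(1)) if match else None


def is_fresh(cache_control: str, age_seconds: float) -> bool:
    """True if a cached copy of this age may be served without contacting the origin.

    Simplification: only max-age is considered.
    """
    max_age = max_age_seconds(cache_control)
    return max_age is not None and age_seconds < max_age


header = "max-age=3600"
print(is_fresh(header, 120))   # True: two minutes old, served from cache
print(is_fresh(header, 4000))  # False: older than one hour, must be refetched
```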

[1] The test set was made up of the 1952 HTML files located in the top directory of the Linux Documentation Project HTML archive.

[2] All of the pages in these tests announced the following server response header indicating their cacheability: Cache-Control: max-age=3600

[3] A note on the compressed files: all compression was performed dynamically by mod_gzip for Apache/1.3.27.

Compressing Output From PHP

A little-used and rarely discussed feature of PHP is the ability to compress script output using GZIP for more efficient transfer to requesting clients. By automatically detecting whether the requesting client can accept and interpret GZIP-encoded HTML, PHP can decrease the size of files transferred to the client by 60% to 80%.

Configuring PHP

The configuration needed to make this work is simple. I use a Linux distro that relies on RPMs, so I check for the following two packages:

- zlib

- zlib-devel

For those not familiar with zlib, it is a highly efficient, open-source compression library. PHP uses this library to compress the output sent to the client.

Compile PHP4 with your favourite ./configure statement. I use the following:

Apache/1.3.x:

./configure --with-apxs=/usr/local/apache/bin/apxs --with-zlib

Apache/2.0.x:

./configure --with-apxs2=/usr/local/apache2/bin/apxs --with-zlib

After doing make && make install, PHP should be ready to go as a dynamic Apache module. Now, you have to make some modifications to the php.ini file. This is usually found in /usr/local/lib, but if it’s not there, don’t panic; you will find some php.ini* files in the directory where you unpacked PHP. Simply copy one of those to /usr/local/lib and rename it php.ini.

Within php.ini, some modifications need to be made to switch on the GZIP compression detection and encoding. There are two methods to do this.

Method 1:

output_buffering = On

output_handler = ob_gzhandler

zlib.output_compression = Off

Method 2:

output_buffering = Off

output_handler =

zlib.output_compression = On

Once this is done, PHP will automatically detect if the requesting client accepts GZIP encoding, and will then buffer the output through the gzhandler function to dynamically compress the data sent to the client.
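The detection step is worth spelling out. The sketch below is not PHP's implementation; it is a small Python illustration of the same idea, with made-up function names: inspect the client's Accept-Encoding header, and GZIP the body only when the client says it can handle it.

```python
import gzip
from typing import Dict, Tuple


def client_accepts_gzip(accept_encoding: str) -> bool:
    """Return True if the Accept-Encoding value permits gzip.

    An explicit gzip entry decides the matter; otherwise a "*" entry applies.
    A q-value of 0 means the encoding is refused.
    """
    wildcard_ok = False
    for item in accept_encoding.split(","):
        token, _, params = item.strip().partition(";")
        token = token.strip().lower()
        params = params.replace(" ", "").lower()
        q = 1.0
        if params.startswith("q="):
            try:
                q = float(params[2:])
            except ValueError:
                q = 0.0  # treat an unparseable q-value as a refusal
        if token == "gzip":
            return q > 0
        if token == "*":
            wildcard_ok = q > 0
    return wildcard_ok


def build_response(body: bytes, accept_encoding: str) -> Tuple[Dict[str, str], bytes]:
    """Compress the body only for clients that announced GZIP support."""
    headers = {"Content-Type": "text/html"}
    if client_accepts_gzip(accept_encoding):
        body = gzip.compress(body)
        headers["Content-Encoding"] = "gzip"
    headers["Content-Length"] = str(len(body))
    return headers, body


page = b"<html>" + b"<p>hello world</p>" * 200 + b"</html>"
headers, payload = build_response(page, "gzip,*")
print(headers.get("Content-Encoding"), len(page), len(payload))
```

A client that sends no Accept-Encoding header gets the original, uncompressed bytes back, which is why nothing breaks for older clients.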

So?

The winning situation here is that for an expenditure of $0 (except your time) and a tiny bit more server overhead (you’re probably still using fewer resources than if you were running ASP on IIS!), you will now be sending much smaller, dynamically generated HTML documents to your clients, reducing your bandwidth usage and the amount of time it takes to download the files.

How much of a size reduction is achieved? Well, I ran a test on my Web server, using WGET to retrieve the file. The configuration and results of the test are listed below.

| Method | Command | File Size |
| --- | --- | --- |
| Method 0: No Compression | wget www.pierzchala.com/resume.php | 9415 bytes |
| Method 1: ob_gzhandler | wget --header="Accept-Encoding: gzip,*" www.pierzchala.com/resume.php | 3529 bytes |
| Method 2: zlib.output_compression | wget --header="Accept-Encoding: gzip,*" www.pierzchala.com/resume.php | 3584 bytes |

You will have to experiment to find the method that gives the most efficient balance between file size and processing overhead on your server.

A 62% reduction in transferred file size without affecting the quality of the data sent to the client is a pretty good return for 10 minutes of work. I recommend including this procedure in all of your future PHP builds.
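The 62% figure can be checked directly from the file sizes reported above:

```python
# File sizes in bytes, copied from the wget results above.
sizes = {
    "No Compression": 9415,
    "ob_gzhandler": 3529,
    "zlib.output_compression": 3584,
}


def reduction(original: int, compressed: int) -> float:
    """Fraction by which the transferred size shrank."""
    return 1 - compressed / original


baseline = sizes["No Compression"]
for method in ("ob_gzhandler", "zlib.output_compression"):
    print(f"{method}: {reduction(baseline, sizes[method]):.1%} smaller")
# ob_gzhandler: 62.5% smaller
# zlib.output_compression: 61.9% smaller
```

On this one page the two methods differ by only 55 bytes, so the choice between them comes down to server overhead rather than output size.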

mod_gzip Compile Instructions

The last time I attempted to compile mod_gzip into Apache, I found that the instructions for doing so were not documented clearly on the project page. After a couple of failed attempts, I finally found the instructions buried at the end of the ChangeLog document.

I present the instructions here to preserve your sanity.

Before you can actually get mod_gzip to work, you have to uncomment it in the httpd.conf file module list (Apache 1.3.x) or add it to the module list (Apache 2.0.x).

Now there are two ways to build mod_gzip: statically compiled into Apache, or as a DSO file for mod_so. If you want to compile it statically into Apache, copy the source into a subdirectory named ‘gzip’ under Apache’s src/modules directory. You can then activate it via a parameter of the configure script.

./configure --activate-module=src/modules/gzip/mod_gzip.a

make

make install

This will build a new Apache with mod_gzip statically built in.

The DSO-Version is much easier to build.

make APXS=/path/to/apxs

make install APXS=/path/to/apxs

/path/to/apachectl graceful

The apxs script is normally located inside the bin directory of Apache.

Compressing Web Output Using mod_gzip for Apache 1.3.x and 2.0.x

Web page compression is not a new technology, but it has just recently gained higher recognition in the minds of IT administrators and managers because of the rapid ROI it generates. Compression extensions exist for most of the major Web server platforms, but in this article I will focus on the Apache and mod_gzip solution.

The idea behind GZIP-encoding documents is very straightforward. Take a file that is to be transmitted to a Web client, and send a compressed version of the data, rather than the raw file as it exists on the filesystem. Depending on the size of the file, the compressed version can run anywhere from 50% to 20% of the original file size.

In Apache, this can be achieved using a couple of different methods. Content Negotiation, which requires that two separate sets of HTML files be generated — one for clients that can handle GZIP-encoding, and one for those who can’t — is one method. The problem with this solution should be readily apparent: there is no provision in this methodology for GZIP-encoding dynamically-generated pages.

The more graceful solution for administrators who want to add GZIP-encoding to Apache is the use of mod_gzip. I consider it one of the overlooked gems for designing a high-performance Web server. Using this module, configured file types — based on file extension or MIME type — will be compressed using GZIP-encoding after they have been processed by all of Apache’s other modules, and before they are sent to the client. The compressed data that is generated reduces the number of bytes transferred to the client, without any loss in the structure or content of the original, uncompressed document.
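The claim that nothing is lost is easy to demonstrate with any GZIP implementation. The sketch below uses Python's standard gzip module rather than mod_gzip itself, and the sample document is invented for the example. Because the sample is highly repetitive, it compresses far better than a typical real page would, so the sizes it prints should not be read as representative.

```python
import gzip
from typing import Tuple


def gzip_round_trip(document: bytes) -> Tuple[int, int, bytes]:
    """Compress and then expand a document, returning both sizes and the result."""
    compressed = gzip.compress(document)
    restored = gzip.decompress(compressed)
    return len(document), len(compressed), restored


# Invented sample page, used only for illustration.
sample = (
    "<html><body>\n"
    + "<p>Web performance matters.</p>\n" * 100
    + "</body></html>\n"
).encode("utf-8")

original_size, compressed_size, restored = gzip_round_trip(sample)
print(original_size, compressed_size, restored == sample)
```

The restored bytes are identical to the original: fewer bytes cross the wire, but the client ends up with exactly the same document.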

mod_gzip can be compiled into Apache as either a static or dynamic module; I have chosen to compile it as a dynamic module in my own server (more compile instructions here). The advantage of using mod_gzip is that this method requires that nothing be done on the client side to make it work. All current browsers — Mozilla, Opera, and even Internet Explorer — understand and can process GZIP-encoded text content.

On the server side, all the server or site administrator has to do is compile the module, edit the appropriate configuration directives that were added to the httpd.conf file, enable the module in the httpd.conf file, and restart the server. In less than 10 minutes, you can be serving static and dynamic content using GZIP-encoding without the need to maintain multiple codebases for clients that can or cannot accept GZIP-encoded documents.

When a request is received from a client, Apache determines if mod_gzip should be invoked by noting if the “Accept-Encoding: gzip” HTTP request header has been sent by the client. If the client sends the header, mod_gzip will automatically compress the output of all configured file types when sending them to the client.

This client header announces to Apache that the client will understand files that have been GZIP-encoded. mod_gzip then processes the outgoing content and includes the following server response headers.

Content-Type: text/html

Content-Encoding: gzip

These server response headers announce that the content returned from the server is GZIP-encoded, but that when the content is expanded by the client application, it should be treated as a standard HTML file. Not only is this successful for static HTML files, but this can be applied to pages that contain dynamic elements, such as those produced by Server-Side Includes (SSI), PHP, and other dynamic page generation methods. You can also use it to compress your Cascading Stylesheets (CSS) and plain text files. As well, a whole range of application file types can be compressed and sent to clients. My httpd.conf file sets the following configuration for the file types handled by mod_gzip:

mod_gzip_item_include mime ^text/.*

mod_gzip_item_include mime ^application/postscript$

mod_gzip_item_include mime ^application/ms.*$

mod_gzip_item_include mime ^application/vnd.*$

mod_gzip_item_exclude mime ^application/x-javascript$

mod_gzip_item_exclude mime ^image/.*$

This allows Microsoft Office and Postscript files to be GZIP-encoded, while not affecting PDF files. PDF files should not be GZIP-encoded, as they are already compressed in their native format, and compressing them leads to issues when attempting to display the files in Adobe Acrobat Reader.[1] The paranoid system administrator may want to explicitly exclude PDF files:

mod_gzip_item_exclude mime ^application/pdf$

Another side note is that nothing needs to be done to allow the GZIP-encoding of OpenOffice (and presumably, StarOffice) documents. Their MIME type is already set to text/plain, allowing them to be covered by one of the default rules.
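To see which MIME types the rules above would catch, the patterns can be tried out in a few lines of Python. This is only an approximation of mod_gzip's behaviour: it assumes that any matching exclude rule wins and that a type must otherwise match an include rule, and it ignores mod_gzip's other rule types entirely. The function name is made up for the example.

```python
import re

# Patterns copied from the mod_gzip_item_include / mod_gzip_item_exclude lines above.
INCLUDE = [
    r"^text/.*",
    r"^application/postscript$",
    r"^application/ms.*$",
    r"^application/vnd.*$",
]
EXCLUDE = [
    r"^application/x-javascript$",
    r"^image/.*$",
    r"^application/pdf$",
]


def would_compress(mime_type: str) -> bool:
    """Assumed precedence: an exclude match wins, else an include match is required."""
    if any(re.search(pattern, mime_type) for pattern in EXCLUDE):
        return False
    return any(re.search(pattern, mime_type) for pattern in INCLUDE)


for mime in ("text/html", "text/plain", "application/msword",
             "application/pdf", "image/png", "application/x-javascript"):
    print(f"{mime}: {'compress' if would_compress(mime) else 'leave alone'}")
```

Under these assumptions PDF files are left alone even without the explicit exclude, since application/pdf matches none of the include patterns; the extra rule simply makes the intent obvious.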

How beneficial is sending GZIP-encoded content? Some simple tests I ran on my Web server using WGET showed that even on a small Web server, there is the potential for substantial savings in bandwidth usage.

| URL | Uncompressed File Size | Compressed File Size |
| --- | --- | --- |
| http://www.pierzchala.com/bio.html | 3122 bytes | 1578 bytes |
| http://www.pierzchala.com/compress/homepage2.html | 56279 bytes | 16286 bytes |

Server administrators may be concerned that mod_gzip will place a heavy burden on their systems as files are compressed on the fly. I argue against that, pointing out that this does not seem to concern the administrators of Slashdot, one of the busiest Web servers on the Internet, who use mod_gzip in their very high-traffic environment.

The mod_gzip project page for Apache 1.3.x is located at SourceForge. The Apache 2.0.x version is available from here.

Long Week…off to read for a while…

I have a whole bunch of new books I will be ploughing through this weekend, between being daddy and helping out around the yard.

With any luck, I will get through them this weekend….

Siebel: To buy, or not to buy…ask Oracle!

Oracle to buy Siebel?

1) Siebel sucks.

2) Oracle blows.

Won’t this produce a null company?

---

Scoble notes.

Music: Guilty Pleasures

Ok, thanks to the team from Apple Matters, I am forced to admit that I have a copy of the BareNaked Ladies doing the theme from the RoadRunner Cartoons.

As for the New Kids on the Block: there is a 12-step program to help folks with that, as well as BNL doing New Kid on the Block on Gordon.

Apache: Your host is an idiot

Ok, I broke my Web server and I didn’t even notice. I broke it so badly that when it re-started, it didn’t even create an access_log. I noticed it a few minutes ago, switched over to my backup Web machine, fixed the problem, and re-launched the primary Web server.

What did I do? I removed the default cURL RPM that came with FC3 and replaced it with cURL compiled from source.

Unfortunately, PHP couldn’t find libcurl.

I am amazed the server was still running in any form.

Off to run some more tests…UGH.