Up to this point, the series has focused on the mundane world of calculating statistical values in order to represent your Web performance data in some meaningful way. Now we step into the more exciting (I lead a sheltered life) world of analyzing the data to make some sense from it.

When companies sign up with a Web performance company, it has been my experience that the first thing they want to do is get in there, push all the buttons, and bounce on the seats. This usually involves setting up a million different measurements, and then establishing alerting thresholds for every single one of them, each treated as critically important and emailed to the pagers of the entire IT team all the time.

While interesting, this is also a great way to get people to actually ignore the data because:

- It’s not telling them what they need to know.

- It’s telling them stuff when they don’t need to know it.

When I speak to a company for the first time, I often ask what their key online business processes are. I usually get either stunned silence or “I don’t know” as a response. Seriously, what has been purchased is a tool, some new gadget that will supposedly make life better; but no thought has been put into how to deploy and make use of the data coming in.

I have the luxury of being able to concentrate on one set of data all the time. In most environments, the flow of data from systems, network devices, e-mail updates, patches, and business data simply becomes noise to be ignored until someone starts complaining that something is wrong. Web performance data becomes another data flow to react to, not act on.

So how do you begin to corral the beast of Web performance data? Start with the simplest question: what do we NEED to measure?

If you talk to IT, Marketing, and Business Management, they will likely come up with three key areas that need to be measured:

- Search

- Authentication

- Shopping Cart

Technology folks will say, “But that doesn’t cover the true complexity of our relational, P2P, AJAX-powered, social media, tagging Web 2.0 site.”

Who cares! The three items listed above pay the bills and keep the lights on. If one of these isn’t working, you fix it now, or you go home.

Now we have three primary targets. We’re ready to start setting up alerts and stuff, right?

Nope. You don’t have enough information yet.

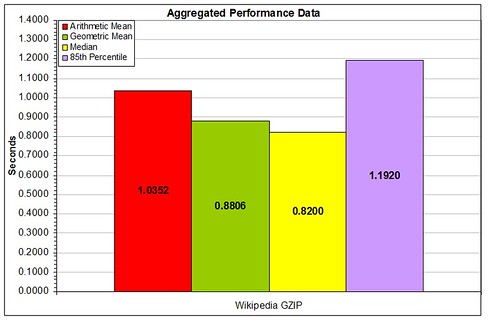

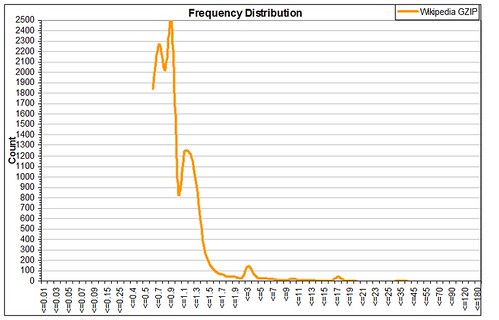

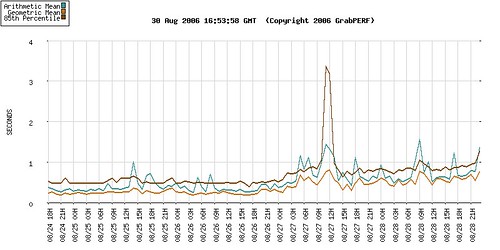

This is your measurement after the first day. This gives you enough information to do all those bright and shiny things that you’ve heard your new Web performance tool can do, doesn’t it?

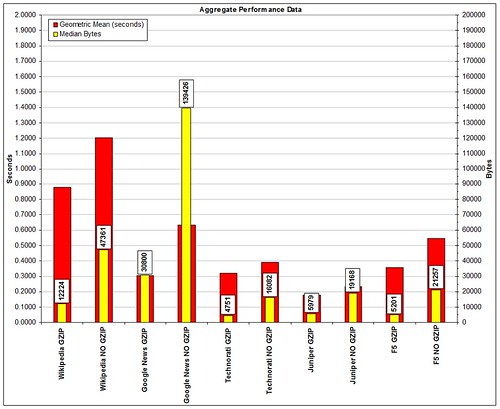

Here’s the same measurement after 4 days. Subtle but important changes have occurred. The most important of these is that the first day of data happened to be gathered on a Friday night. Most sites would agree that performance on a Friday night is far different from what you would find on a Monday morning. On Monday morning, this site shows a noticeable upward shift in performance.
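One way to see this effect in your own data is to group measurements by day of week and hour before summarizing them. The sketch below is only an illustration, assuming each measurement is a (timestamp, response time in seconds) pair; the function name `median_by_weekday_hour` and the sample values are made up for the example and do not come from the charts above.

```python
from collections import defaultdict
from datetime import datetime
from statistics import median

WEEKDAYS = ["Monday", "Tuesday", "Wednesday", "Thursday",
            "Friday", "Saturday", "Sunday"]


def median_by_weekday_hour(measurements):
    """Return {(weekday_name, hour): median response time in seconds}."""
    buckets = defaultdict(list)
    for timestamp, seconds in measurements:
        # datetime.weekday() returns 0 for Monday through 6 for Sunday.
        key = (WEEKDAYS[timestamp.weekday()], timestamp.hour)
        buckets[key].append(seconds)
    return {key: median(values) for key, values in buckets.items()}


# Hypothetical samples: a Friday night and the following Monday morning.
samples = [
    (datetime(2024, 1, 5, 22, 0), 1.1),   # Friday
    (datetime(2024, 1, 5, 22, 15), 1.3),
    (datetime(2024, 1, 5, 22, 30), 1.2),
    (datetime(2024, 1, 8, 9, 0), 2.4),    # Monday
    (datetime(2024, 1, 8, 9, 15), 2.8),
    (datetime(2024, 1, 8, 9, 30), 2.6),
]

summary = median_by_weekday_hour(samples)
for (day, hour), value in sorted(summary.items()):
    print(f"{day} {hour:02d}:00 median = {value:.1f}s")
```

With only the Friday samples, there would be a single bucket and nothing to compare it against, which is the problem with drawing conclusions from one day of data.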

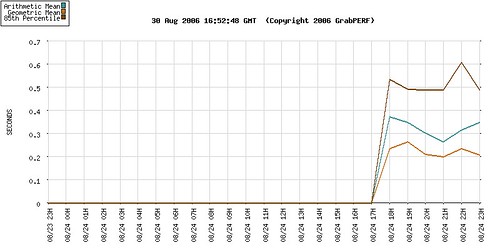

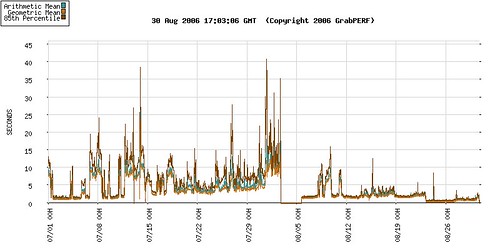

And what do you do when your performance looks like this?

Baselining is the ability to predict the performance of your site under normal circumstances on an ongoing basis. This prediction rests on knowing how the site has performed in the past, as well as how it has behaved under abnormal conditions. Until you can predict how your site should behave, you cannot begin to understand why it behaves the way it does.
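As a rough illustration of what "predicting" can mean in practice, the sketch below derives an expected range from past response times and checks whether a new measurement falls inside it. Using the median plus or minus a multiple of the median absolute deviation is my own choice for the example, not a method prescribed by any particular Web performance tool; the names `build_baseline` and `is_normal`, the `tolerance` value, and the sample data are all hypothetical. In practice the history would come from comparable periods, such as the same hour on previous Mondays.

```python
from statistics import median


def build_baseline(history, tolerance=3.0):
    """Return (low, high) bounds for 'normal' response times.

    The bounds are the median of the history plus or minus
    `tolerance` times the median absolute deviation (MAD).
    """
    center = median(history)
    mad = median([abs(value - center) for value in history])
    return center - tolerance * mad, center + tolerance * mad


def is_normal(value, baseline):
    """True if a measurement falls inside the baseline bounds."""
    low, high = baseline
    return low <= value <= high


# Hypothetical response times (seconds) from past comparable periods.
history = [2.0, 2.2, 2.1, 2.4, 2.3, 2.2, 2.5]
baseline = build_baseline(history)

print(is_normal(2.3, baseline))  # a typical measurement
print(is_normal(4.0, baseline))  # well outside the historical range
```

The median and MAD are used here because a handful of extreme measurements will not drag them around the way they would a mean and standard deviation, but any summary that reflects how the site normally behaves could serve the same purpose.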

Focusing on the three key transaction paths or business processes listed above helps you and your team wrap your heads around what the site is doing right now. Once a baseline for the site’s performance exists, you can begin to benchmark your site by comparing its performance to that of others carrying out the same business process.