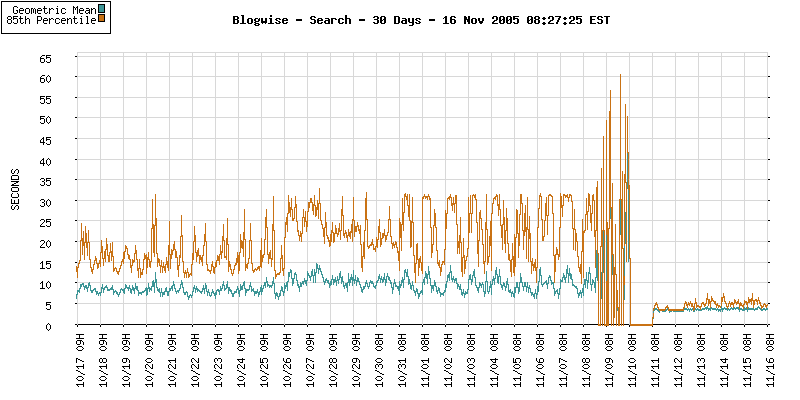

Blogwise had a server issue earlier this month. Sven, the great guy who runs the service, posted a very open account of what happened:

> I’m very sorry if you tried to access Blogwise over the last four days and got either no service, or an appallingly poor connection. It looks like there was a multiple server failure which, considering I only have a few, was quite damaging.
>
> Events were made worse by my being out of the country at the time (ironically, my first break since Blogwise was started over three years ago). Although I had somebody monitoring the servers, and I knew fairly early that something was up, my lack of access to a computer and awkward time zones made fixing things extremely difficult.
>
> The site should now be running again. If you had assumed Blogwise had closed suddenly, it hasn’t. I’m still here and as committed as ever – we just had the most unfortunate event at the worst possible time. This event has highlighted all sorts of vulnerabilities in the service, and has acted as a grim reminder of the need for redundancy and proper support.
>
> It’s going to be tough to build a more reliable infrastructure, and to continue developing Blogwise. I don’t have the luxury of investors, nor a great deal of revenue from advertising, but the need to resolve this has been made painfully obvious and time and money will be found.
>
> Finally, a thankyou to you all for your continued support, your patience and your understanding. With the site back up, I’m going to enjoy my last three days in Chicago and look forward to getting back into the flow of things this weekend.
>
> Sven, 12 Nov 2005

On the upside, performance has improved dramatically!

Great work, Sven… and I feel your pain.